MetaFormer Is Actually What You Need for Vision

MetaFormer is a general architecture abstracted from Transformers without specifying the token mixer module. Based on extensive experiments, the authors argue that MetaFormer is the key player in achieving superior results for recent Transformer and MLP models, and the paper reports that it achieves competitive performance.
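To make "without specifying the token mixer" concrete, here is a minimal NumPy sketch of a block whose token mixer is passed in as an argument. It assumes the usual Transformer layout of two residual sub-blocks (normalize, mix tokens, add; then normalize, apply a per-token MLP, add). The function names, the ReLU activation, the parameter-free normalization, and both example mixers are illustrative assumptions, not the paper's implementation.

```python
import numpy as np


def layer_norm(x, eps=1e-5):
    """Normalize each token (row) over its channel dimension (no learned scale/shift)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def channel_mlp(x, w1, w2):
    """Two-layer MLP applied to every token independently (ReLU is an assumption)."""
    return np.maximum(x @ w1, 0.0) @ w2


def metaformer_block(x, token_mixer, w1, w2):
    """One block with a pluggable token mixer.

    x:           array of shape (num_tokens, channels)
    token_mixer: any callable mapping (num_tokens, channels) -> same shape
    """
    y = x + token_mixer(layer_norm(x))              # sub-block 1: mix information across tokens
    return y + channel_mlp(layer_norm(y), w1, w2)   # sub-block 2: mix information across channels


# Two hypothetical token mixers, chosen only to show that the slot is interchangeable.
def identity_mixer(x):
    """No communication between tokens."""
    return x


def mean_mixer(x):
    """Every token receives the average of all tokens."""
    return np.broadcast_to(x.mean(axis=0, keepdims=True), x.shape)


rng = np.random.default_rng(0)
tokens = rng.normal(size=(4, 8))        # 4 tokens, 8 channels
w1 = rng.normal(size=(8, 32)) * 0.1     # expand channels
w2 = rng.normal(size=(32, 8)) * 0.1     # project back

out_identity = metaformer_block(tokens, identity_mixer, w1, w2)
out_mean = metaformer_block(tokens, mean_mixer, w1, w2)
print(out_identity.shape, out_mean.shape)
```

Swapping `identity_mixer` for `mean_mixer` changes only the argument passed to `metaformer_block`; the rest of the block is untouched, which is the sense in which the architecture leaves the token mixer open.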

Table 2 from MetaFormer is Actually What You Need for Vision Semantic Scholar

MetaFormer is Actually What You Need for Vision DeepAI

MetaFormer Is Actually What You Need for Vision Papers With Code

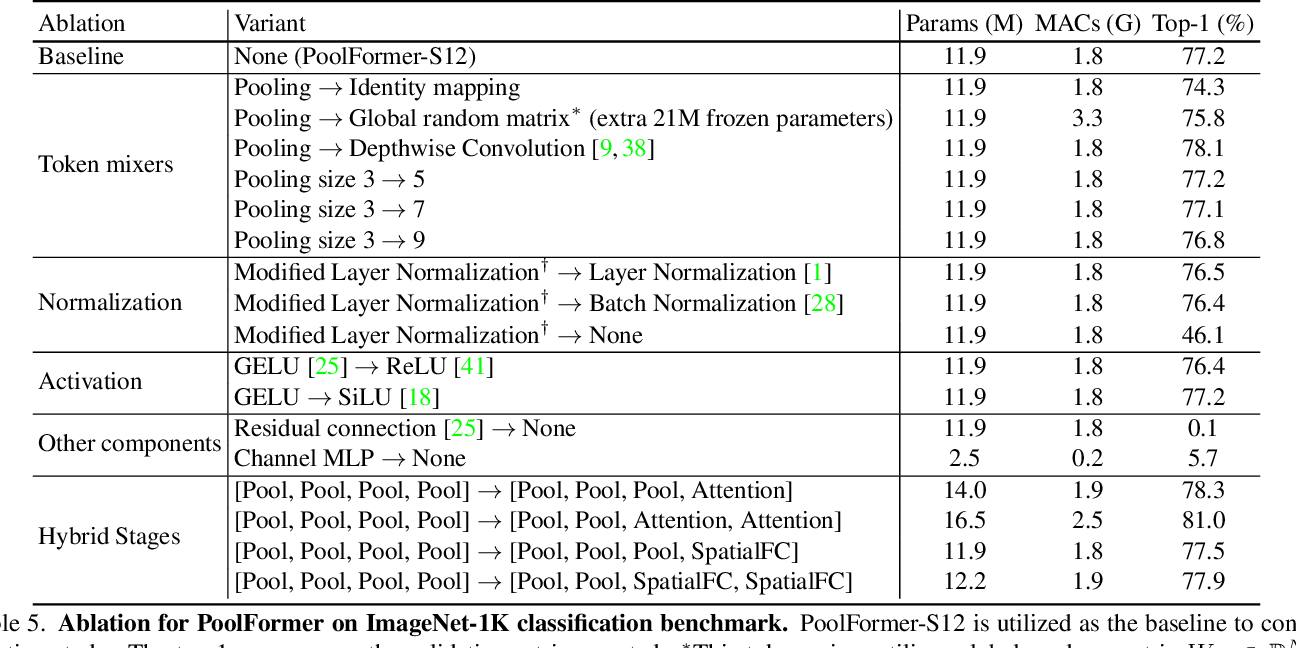

Table 5 from MetaFormer is Actually What You Need for Vision Semantic Scholar

Table 6 from MetaFormer is Actually What You Need for Vision Semantic Scholar

Table 4 from MetaFormer is Actually What You Need for Vision Semantic Scholar

[PDF] MetaFormer is Actually What You Need for Vision Semantic Scholar

PoolFormer MetaFormer Is Actually What You Need for Vision

Figure 2 from MetaFormer is Actually What You Need for Vision

![[PDF] MetaFormer is Actually What You Need for Vision Semantic Scholar](https://figures.semanticscholar.org/57150ca7d793d6f784cf82da1c349edf7beb6bc2/500px/4-Table1-1.png)